My Role

Solo developer with full ownership: architecture, backend, frontend, database design, infrastructure, and ongoing operations. I ran discovery with the brokerage owner, translated business requirements into a technical plan, and built the entire system from scratch. The work is part of an ongoing 24-month retainer that covers this platform plus a public marketing site.

The brokerage had no engineering resources and no budget for enterprise MLS software. Their competitive advantage — information timing — was leaking through group texts and hallway conversations. I built around that reality: a tool that plugged directly into the workflows they already had (including a Google Sheets sync for agents who refused to leave spreadsheets) while adding the search, enrichment, and access control they couldn't get from off-the-shelf tools.

Approach

Go backend with clean architecture — strict layering with dependency injection and no framework magic. Four layers: HTTP handlers (Chi v5 router with middleware for JWT auth, structured logging, CORS, rate limiting), usecase layer (business logic for auth, listings, search, IDX sync, notifications, reporting), domain layer (entity definitions and repository interfaces for users, listings, properties, agents, brokers, offices, media), and adapter layer (PostgreSQL repositories via pgx v5 with connection pooling, Redis cache, OAuth providers).

PostgreSQL 16 with PostGIS for the data layer. 618-line initial migration covering 20+ tables. Listings use a state machine (coming_soon → active → pending → sold/withdrawn/expired) with transitions validated in the service layer and logged to listing_status_history for audit compliance. Properties carry PostGIS geometry columns with automatic ST_Point calculation via database triggers, plus full-text search vectors (tsvector) weighted across street address, subdivision, and county.

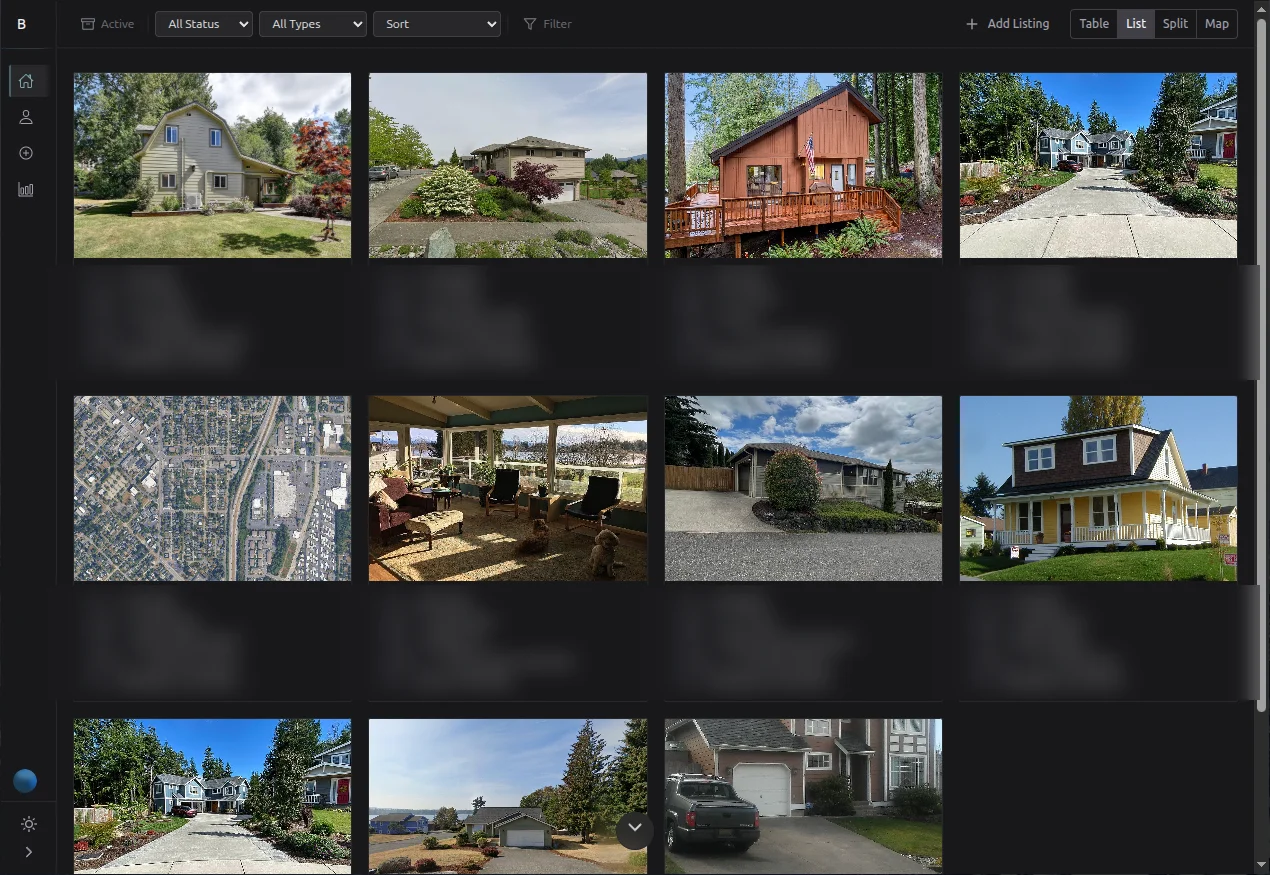

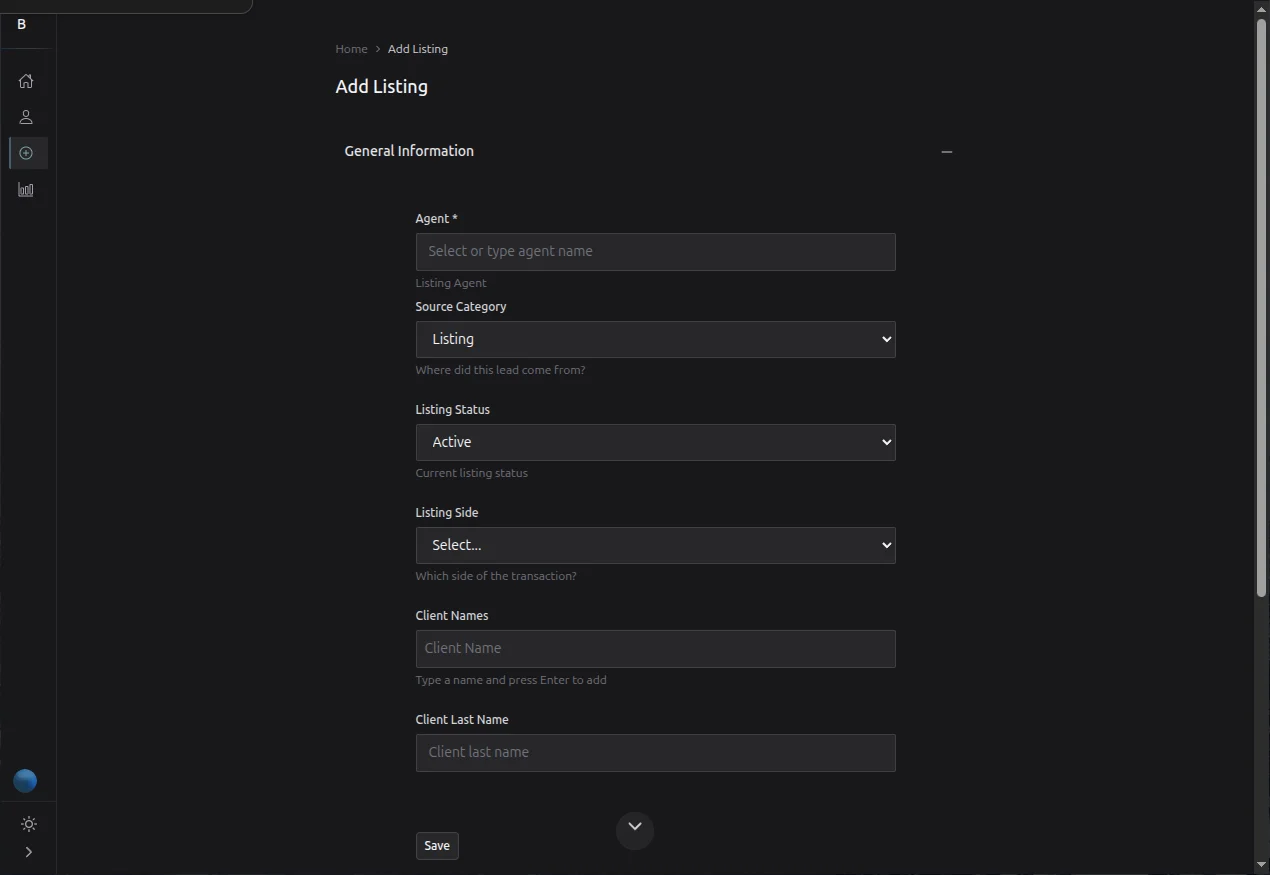

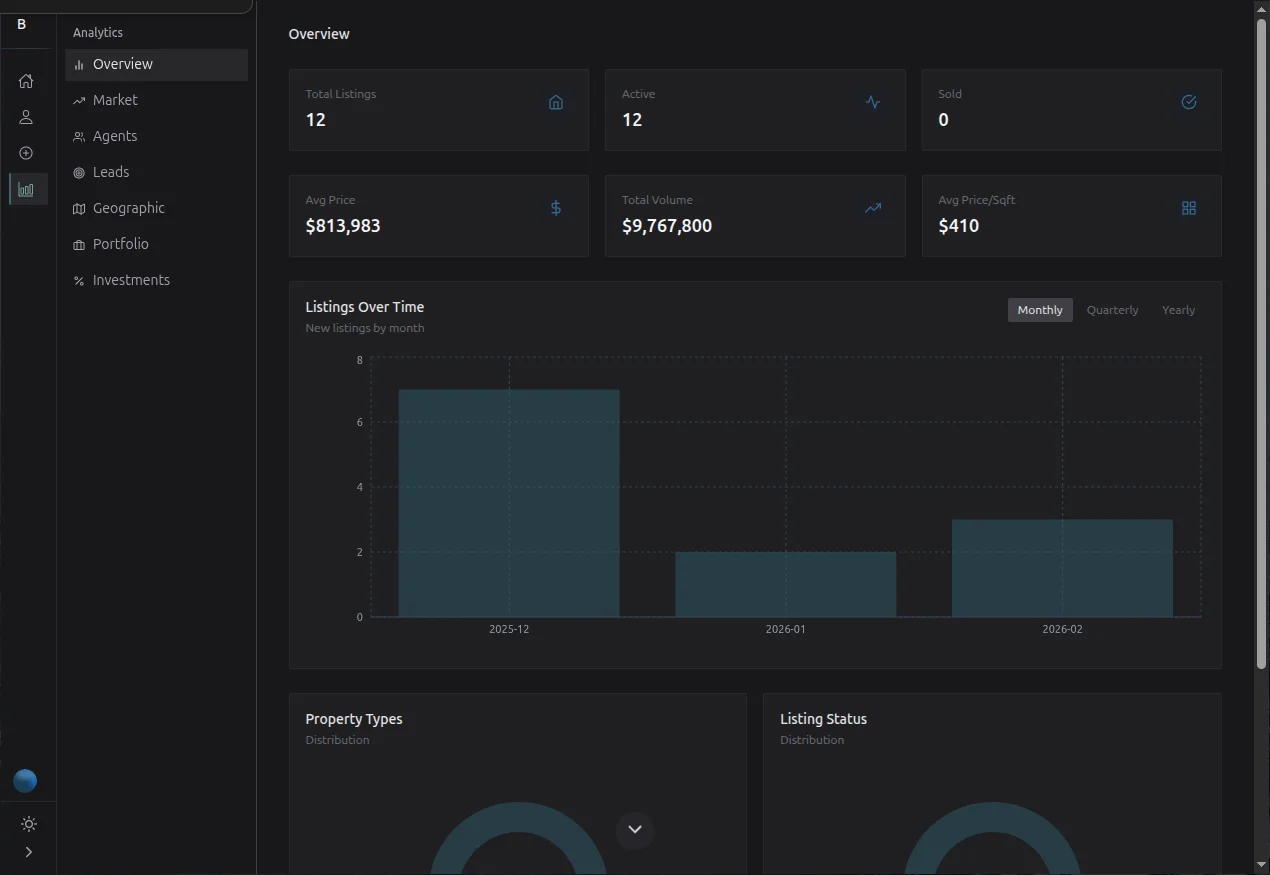

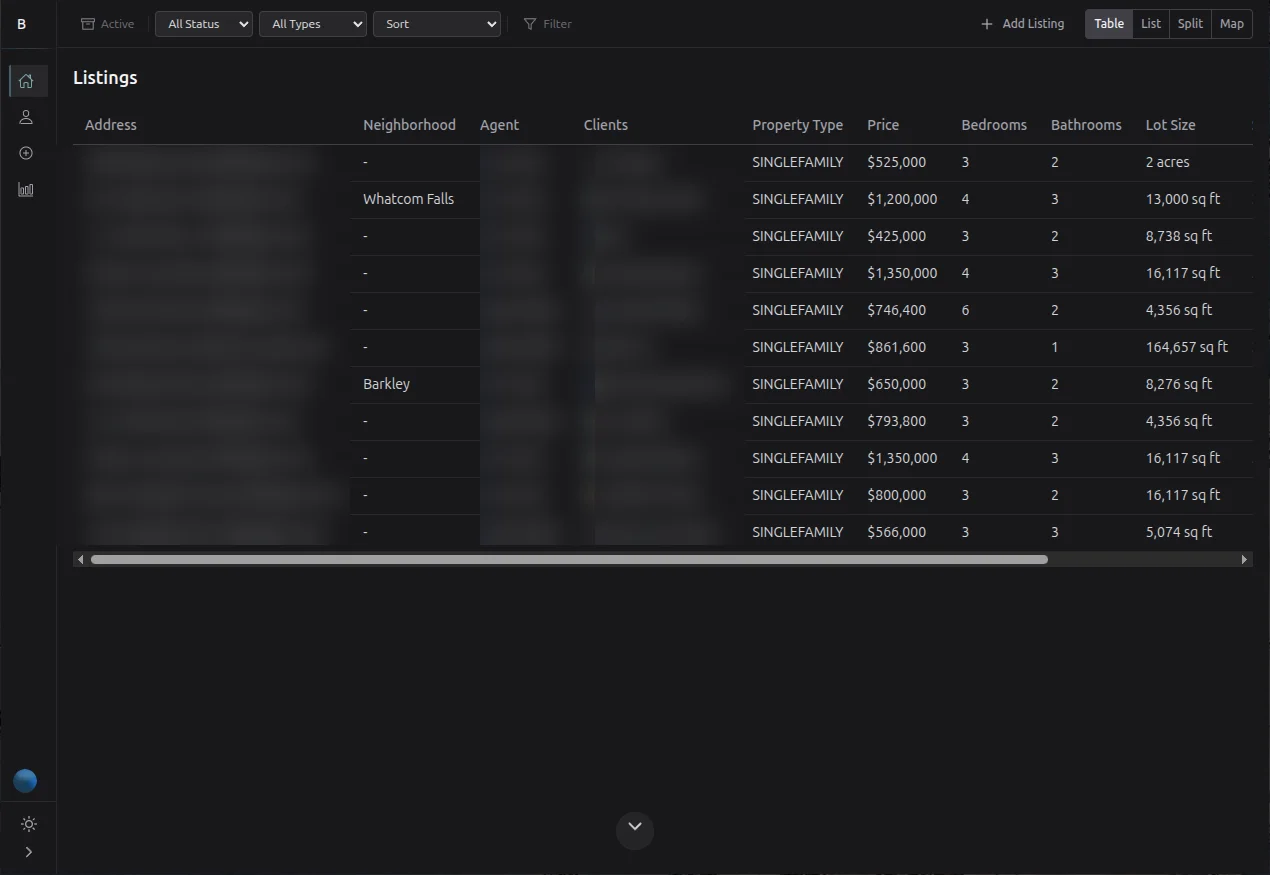

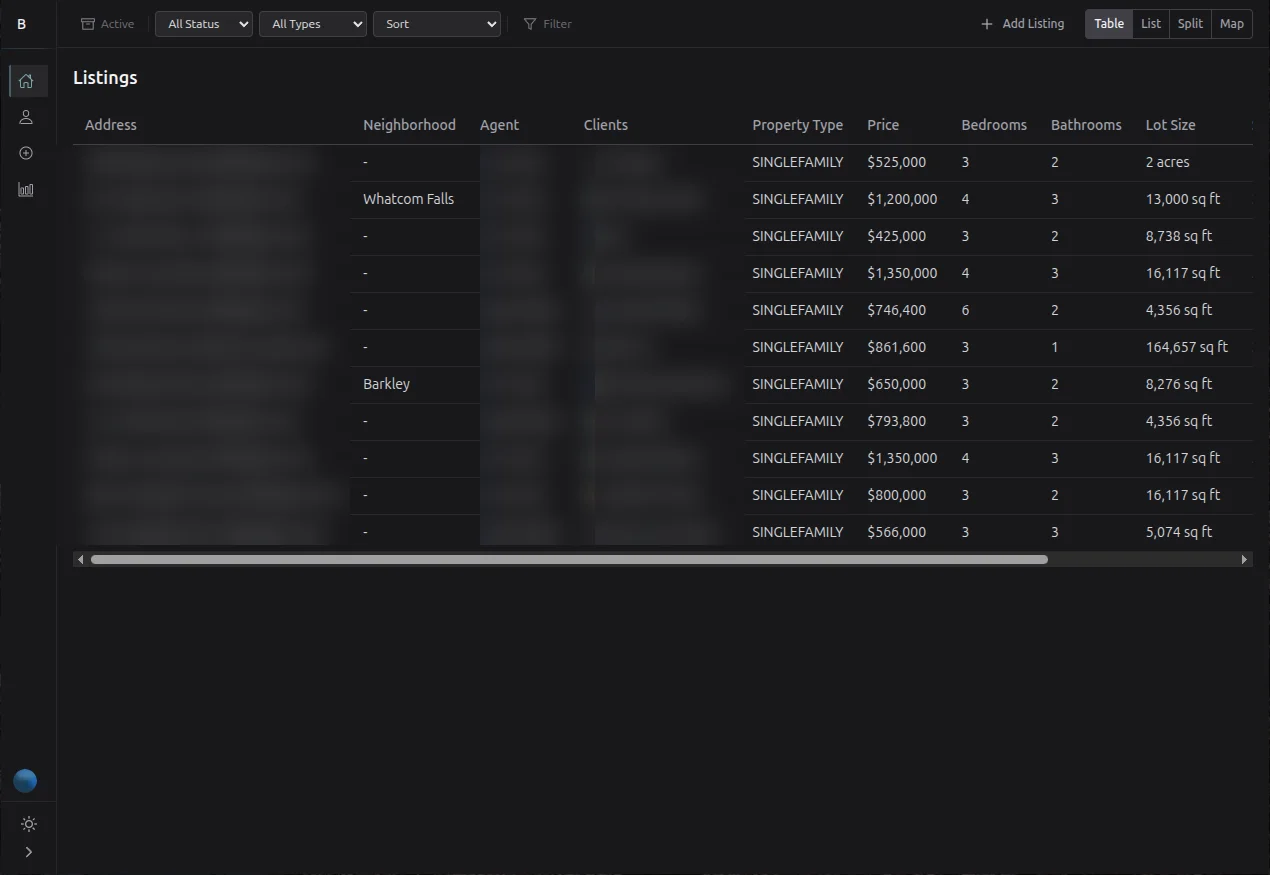

React 19 frontend with Vite, TypeScript, Zustand for auth state, TanStack React Query for server state, React Hook Form + Zod for validation, and Tailwind v4. The UI includes 7 analytics views, advanced multi-faceted search and filtering, Zillow auto-enrichment on address entry, and Google Sheets sync for agents who still want spreadsheets.

Key Decisions

Go over Node.js for the backend. The system handles real-time listing data, geospatial queries, and IDX feed syncing on 15-minute intervals. Go gave me compile-time type safety, a ~20MB Alpine container for deployment, native concurrency for the sync workers, and minimal runtime overhead. I considered Node with TypeScript, but for a backend with this much data processing and no need for SSR or a shared frontend language, Go was the cleaner choice.

PostGIS over application-level geo filtering. Radius-based search, bounding-box queries for map viewports, and exact coordinate lookups are all pushed down to GIST-indexed geometry columns. Database triggers compute geometry from lat/lng on insert and update, keeping the application layer free of spatial math. I evaluated Elasticsearch for search, but the listing volume didn't justify the operational overhead; PostgreSQL's tsvector with English stemming handles full-text search well at this scale.

Four-role RBAC baked into JWT claims. Admin, broker, agent, and consumer roles — each with different visibility into listings, search results, and analytics. The brokerage owner needed to control who saw pre-market listings. JWT claims carry role, agent ID, broker ID, and office ID so every handler enforces permissions without additional database lookups. Refresh tokens are stored as bcrypt hashes, invalidated after use via rotation, and cleaned up on password change.
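Because the claims already carry role, agent, broker, and office IDs, a visibility check can be a pure function with no database access. The sketch below assumes the token has already been verified (signing and parsing are omitted). The `canView` rules, where admins and brokers see every pre-market listing and agents see only their own or their office's, are an illustrative policy, not the brokerage's actual one.

```go
package main

import "errors"

type Role string

const (
	RoleAdmin    Role = "admin"
	RoleBroker   Role = "broker"
	RoleAgent    Role = "agent"
	RoleConsumer Role = "consumer"
)

// Claims mirrors the fields carried in the JWT after verification.
type Claims struct {
	UserID   string
	Role     Role
	AgentID  string
	BrokerID string
	OfficeID string
}

// ListingView is the minimal listing data needed for the decision.
type ListingView struct {
	Status   string
	AgentID  string
	OfficeID string
}

var ErrForbidden = errors.New("forbidden")

// canView gates pre-market ("coming_soon") listings using claims alone.
// The specific rules here are assumptions for illustration.
func canView(c Claims, l ListingView) error {
	if l.Status != "coming_soon" {
		return nil
	}
	switch c.Role {
	case RoleAdmin, RoleBroker:
		return nil
	case RoleAgent:
		if c.AgentID != "" && c.AgentID == l.AgentID {
			return nil
		}
		if c.OfficeID != "" && c.OfficeID == l.OfficeID {
			return nil
		}
	}
	return ErrForbidden
}
```

A middleware would decode the claims once per request and store them in the request context, so handlers call checks like this without another lookup.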

Combined repository pattern over a DI framework. Instead of injecting separate listing and property repositories into each usecase, I composed them into a CombinedListingRepository that satisfies multiple domain interfaces. This keeps dependency injection explicit and testable without pulling in a container framework — Go's interfaces made this natural.

What Was Hard

Listing state machine with audit compliance. Real estate listings have legally significant status transitions: you cannot move a listing from sold back to active, and every transition needs an audit trail. I built a state machine in the service layer that validates transitions against an allowed-transitions map and logs every change with the old value, new value, user, timestamp, IP, and user agent. Getting the edge cases right (relisting expired listings, reactivating withdrawn ones) took more iteration than expected.

IDX feed sync reliability. The system syncs with external MLS sources on 15-minute intervals via RETS/RESO APIs. These feeds are inconsistent — fields change names between MLS providers, timestamps vary in format, and connections drop. I built per-provider adapters with detailed sync logs that track records created, updated, deleted, and errored per run, so the brokerage owner can see exactly what happened on every sync cycle. Retries with exponential backoff handle transient failures.

Balancing enrichment sources. The system auto-enriches listings with Zillow Zestimates, tax records, and property details on address entry. But third-party data is unreliable — Zillow estimates lag, tax records have different update schedules per county, and rate limits vary. I ended up building a cache-first enrichment layer where each data source has its own TTL and staleness threshold, so the UI always shows the best available data without blocking on slow or unavailable APIs.

The Result

The platform is deployed via Coolify (self-hosted CI/CD) with staging and production environments. The brokerage has a central listing system with role-based access, geospatial search, auto-enrichment, 7 analytics dashboards, and a full audit trail, replacing the group texts and spreadsheets that were losing them deals. The Go backend deploys as a ~20MB Alpine container configured through 47 environment variables, loaded with Viper in line with 12-factor app conventions.

The engagement is ongoing. The next phase is wiring the platform into the brokerage's CRM (Brivity) and client engagement tool (Homebot) to close the loop between listings, leads, and agent activity — building toward a single dashboard where the brokerage owner sees pipeline, production, and commissions in one screen instead of five disconnected tools.